Imagine you’re locking a box with a secret key. In cloud computing, your files ride on shared systems, and data encryption is how you keep that box locked so that only the right people can open it.

Cloud storage is growing fast, and so is the need to protect data in everyday workflows, for both businesses and individuals. When encryption works, it scrambles your info into unreadable code, then lets authorized users decrypt it later. That means even if someone gets a copy, they can’t make sense of it.

Next, you’ll see how encryption works in practice, the main types you’ll hear about, where they’re used, plus the benefits and trade-offs you should know. How safe is your cloud data right now?

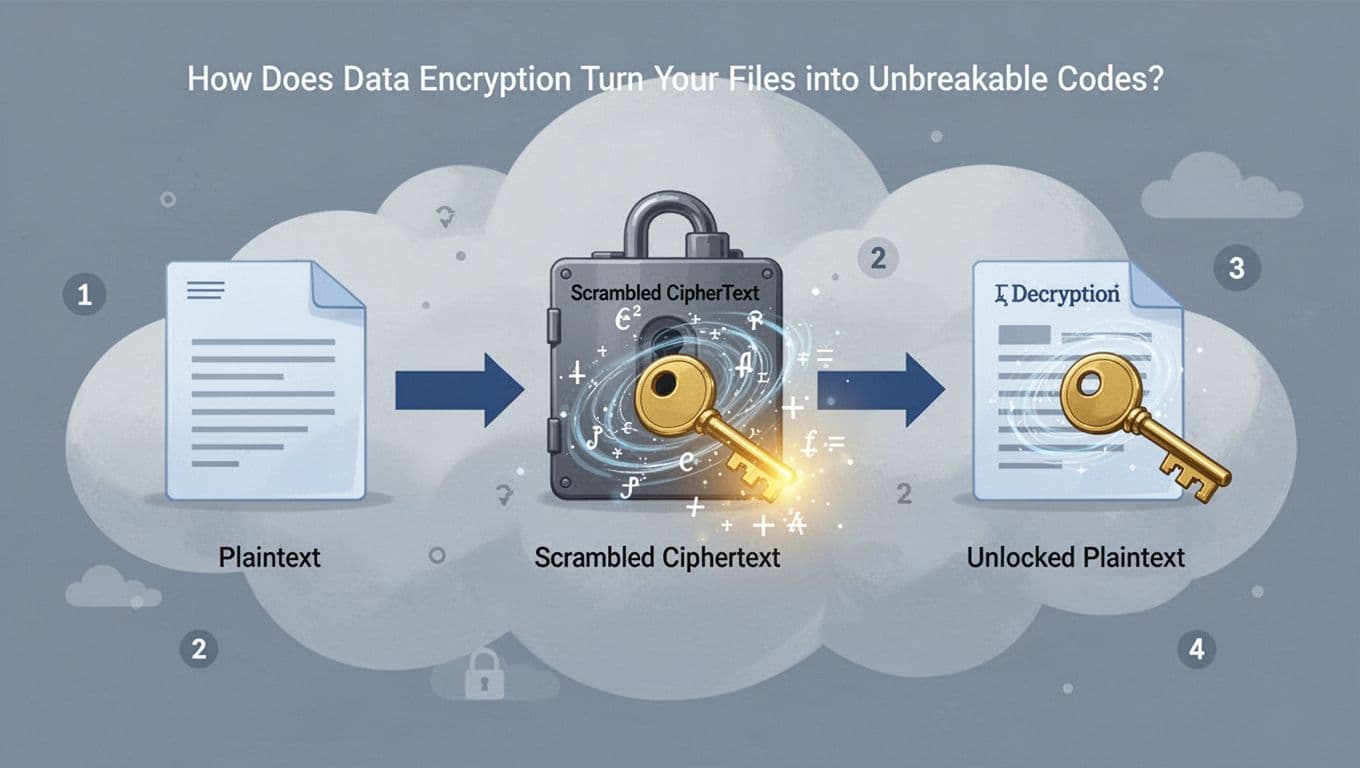

How Does Data Encryption Turn Your Files into Unbreakable Codes?

Encryption works like a tamper-resistant mailbox. First, your readable data (plaintext) goes in. Next, encryption math scrambles it into ciphertext. After that, only the right key can put the pieces back together.

Here’s the core process, step by step:

- Start with plaintext (a file, a note, or a login payload you can read).

- Choose an encryption algorithm (the rules for scrambling).

- Provide a key (the secret ingredient that makes the scrambling reversible).

- Encrypt to create ciphertext (the “locked” unreadable version).

- Decrypt using the key to restore the original plaintext.

In cloud setups, this happens automatically and consistently, so you don’t have to treat every file like a special case. Most providers use AES-256 for bulk encryption at rest, and TLS for data in transit. For example, AWS, Azure, and Google Cloud commonly rely on these standards, then manage keys with their key services.

That’s what “unbreakable” means in real life. It’s not magic: without the key, the ciphertext looks like random noise, even if an attacker steals the file.
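
To make the plaintext, key, ciphertext round trip concrete, here’s a minimal sketch using only Python’s standard library. The cipher below is a toy built for illustration (a SHA-256 keystream XORed with the data), not AES, and the names `toy_encrypt` and `toy_decrypt` are made up for this example. Real systems should use a vetted AES implementation.

```python
import hashlib
import secrets


def keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Derive `length` pseudo-random bytes from key + nonce (toy construction)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out.extend(hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest())
        counter += 1
    return bytes(out[:length])


def toy_encrypt(key: bytes, plaintext: bytes) -> bytes:
    """Return nonce + ciphertext. Illustration only, NOT real AES."""
    nonce = secrets.token_bytes(16)
    stream = keystream(key, nonce, len(plaintext))
    return nonce + bytes(p ^ s for p, s in zip(plaintext, stream))


def toy_decrypt(key: bytes, blob: bytes) -> bytes:
    """Split off the nonce, rebuild the keystream, and undo the XOR."""
    nonce, ciphertext = blob[:16], blob[16:]
    stream = keystream(key, nonce, len(ciphertext))
    return bytes(c ^ s for c, s in zip(ciphertext, stream))


key = secrets.token_bytes(32)                     # 256-bit secret key
plaintext = b"quarterly-report.xlsx contents"
blob = toy_encrypt(key, plaintext)                # what an attacker would steal
restored = toy_decrypt(key, blob)                 # right key: original comes back
wrong = toy_decrypt(secrets.token_bytes(32), blob)  # wrong key: still noise
```

With the right key, `restored` matches the original bytes. With any other key, the output is just more noise.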

Symmetric Encryption: Fast and Simple for Big Data Loads

Symmetric encryption uses one shared key. That same key locks the file and unlocks it. So yes, it’s simple, and that’s why it shines for large files in cloud storage and backups.

A good mental picture is a single lock and matching key. If you lose the key, the box stays closed. If someone else gets the key, they can open everything it protects. Because of that, the “hard part” is often key sharing and key protection, not the encryption itself.

In clouds, AES-256 is the common go-to for symmetric encryption. You can think of AES as the scrambling method, and AES-256 as the version with a strong key size. If you want a clear explanation of what AES-256 is and why it matters, see AES-256 encryption overview.

Benefits of symmetric encryption for big data:

- Speed: Encrypts and decrypts quickly, even for huge backups.

- Efficiency: Works well for “encrypt everything” storage defaults.

- Consistency: Easy to apply across datasets and services.

- Strong protection: Without the key, data stays unreadable. With the correct key, it comes back exactly as it went in.

Example: When a provider encrypts cloud backups, symmetric encryption keeps stored backups unreadable if stolen, while the service can still decrypt them for authorized restores.

Asymmetric Encryption: Extra Security with Public-Private Key Pairs

Asymmetric encryption uses two keys. One key is public, meaning anyone can use it. The other is private, meaning only the owner can use it.

Here’s the analogy: the public key is like a mailbox address. You can drop off a locked note using that address. Only the owner has the matching private key to open it.

It’s slower than symmetric encryption, but it solves a key problem: safe key sharing. Instead of sharing one secret key with everyone, you share a public key. That lets strangers encrypt data for you without gaining the ability to decrypt it.

This is closely tied to HTTPS and TLS, because those secure web connections depend on public key cryptography for handshakes and trust. If you want a beginner-friendly breakdown, see what asymmetric encryption is.

In real cloud apps, you’ll often see this flow:

- A user or service encrypts data using the recipient’s public key.

- The recipient decrypts it using the private key only they control.

- The same session may then switch to symmetric encryption for speed.

So, compared to symmetric encryption’s shared-secret simplicity, asymmetric encryption adds safer sharing without giving away the unlock key.
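
The flow above can be sketched with textbook RSA on tiny numbers. This is a toy under loud assumptions: the primes are far too small, there is no padding, and each byte is wrapped separately, so it is trivially breakable. The helper names `wrap` and `unwrap` are hypothetical. It only shows the shape of the idea: anyone can lock a session key with the public key, and only the private key opens it.

```python
import secrets

# Toy textbook RSA with tiny primes: illustration only, never use for real data.
p, q = 61, 53
n = p * q                      # public modulus
phi = (p - 1) * (q - 1)
e = 17                         # public exponent
d = pow(e, -1, phi)            # private exponent via modular inverse (Python 3.8+)

public_key = (e, n)            # safe to hand to anyone
private_key = (d, n)           # stays with the recipient


def wrap(session_key: bytes, pub: tuple) -> list:
    """Encrypt each byte of a session key with the recipient's public key."""
    exponent, modulus = pub
    return [pow(byte, exponent, modulus) for byte in session_key]


def unwrap(wrapped: list, priv: tuple) -> bytes:
    """Recover the session key with the matching private key."""
    exponent, modulus = priv
    return bytes(pow(value, exponent, modulus) for value in wrapped)


session_key = secrets.token_bytes(16)        # symmetric key for the bulk data
wrapped = wrap(session_key, public_key)      # sender needs only the public key
recovered = unwrap(wrapped, private_key)     # recipient needs the private key
```

Once both sides hold `session_key`, the rest of the session can use fast symmetric encryption, which is the switch described in the last step.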

Where Does Encryption Guard Your Cloud Data Every Day?

Encryption doesn’t guard your cloud data in one single moment. Instead, it works in layers, in three places: when data sits, when data moves, and when data runs. If one layer is missing, that gap becomes the weak spot.

Think of it like airport security. You need locks on your suitcase (at rest), secure travel rules for boarding (in transit), and safe handling once you land (in use). That full coverage is why strong cloud encryption matters.

Data at Rest: Shielding Stored Files from Thieves

Data at rest is what you store in the cloud, like files in Amazon S3, database records, and snapshots. The key idea is simple: even if someone steals the storage media, they should still see only scrambled ciphertext.

In practice, cloud storage encryption works like this:

- Your provider encrypts data before it writes it to disks.

- The encrypted bits sit on drives in unreadable form.

- When you (or an authorized service) need the data, the system decrypts it using the right key.

This is where features like Azure data encryption at rest come in. Microsoft explains that encryption at rest helps protect data across many storage types in Azure, including blobs and managed database services, with platform-managed options available through their security tools. See Azure data encryption at rest.

Even better, many providers use strong, common defaults. For example, you may see AES-256 used for stored data encryption. If an attacker gets the raw files, the encryption still blocks them, because the key never travels with the data in a usable form.

From a compliance angle, encryption at rest is often a baseline requirement. Auditors care because it reduces the impact of lost backups, improperly handled snapshots, or unauthorized storage access. Still, the story doesn’t end at “encrypted.” You also want strong key management. If your encryption keys live alongside your data, attackers can often undo the whole setup.

So, when you evaluate cloud encryption, ask:

- Is encryption on by default or only if you configure it?

- Are keys stored and protected using a separate key service?

- Can you prove policies for stored data protection?
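
One common way to keep keys apart from data is envelope encryption: each object gets its own data key, and only a wrapped copy of that key is stored next to the ciphertext. Here’s a rough sketch of the pattern. `ToyKeyService` is a hypothetical in-memory stand-in for a managed key service, and `toy_cipher` is an illustrative XOR keystream, not AES.

```python
import hashlib
import secrets


def toy_cipher(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """XOR data with a SHA-256-derived keystream. Toy stand-in for AES, not secure."""
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream.extend(hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest())
        counter += 1
    return bytes(d ^ s for d, s in zip(data, stream))


class ToyKeyService:
    """Hypothetical key service: the master key never leaves this object."""

    def __init__(self) -> None:
        self._master_key = secrets.token_bytes(32)

    def generate_data_key(self):
        """Return a fresh data key plus a wrapped copy that is safe to store."""
        data_key = secrets.token_bytes(32)
        nonce = secrets.token_bytes(16)
        return data_key, nonce + toy_cipher(self._master_key, nonce, data_key)

    def unwrap(self, wrapped: bytes) -> bytes:
        nonce, body = wrapped[:16], wrapped[16:]
        return toy_cipher(self._master_key, nonce, body)


def store(kms: ToyKeyService, plaintext: bytes) -> dict:
    data_key, wrapped_key = kms.generate_data_key()
    nonce = secrets.token_bytes(16)
    # Only the wrapped key sits next to the data; the plaintext data key is dropped.
    return {"wrapped_key": wrapped_key, "nonce": nonce,
            "ciphertext": toy_cipher(data_key, nonce, plaintext)}


def load(kms: ToyKeyService, record: dict) -> bytes:
    data_key = kms.unwrap(record["wrapped_key"])   # requires access to the key service
    return toy_cipher(data_key, record["nonce"], record["ciphertext"])


kms = ToyKeyService()
record = store(kms, b"customer-table snapshot")
restored = load(kms, record)
```

Someone who copies `record` gets ciphertext and a wrapped key, but nothing usable without a call to the key service.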

Data in Transit: Locking Down Info as It Travels Networks

Data in transit is the data that travels between your device and the cloud. It includes uploads, API calls, email attachments, and service-to-service traffic. In this phase, encryption turns a readable message into a protected tunnel, so outsiders can’t spy or alter it.

Most cloud traffic security comes down to TLS (the successor to SSL), which powers HTTPS. With TLS, the sender and receiver negotiate an encrypted session, then exchange data inside that secure channel. Importantly, each session negotiates its own keys, so capturing one transfer doesn’t give an attacker a stable “pattern” to reuse on the next.

What does this protect, day to day?

- You posting a file to cloud storage

- An app calling a database API

- Authentication tokens moving between services

- Monitoring systems sending logs to a central endpoint

If you want a provider-backed example, AWS documents guidance for enforcing encryption in transit, focused on meeting security and compliance needs. Their SEC09-BP02 Enforce encryption in transit describes how encryption helps maintain confidentiality as data travels, including across untrusted networks.

Meanwhile, specific services also use TLS for in-transit links. For example, Amazon Athena uses TLS between Athena, Amazon S3, and customer applications that access it. AWS notes this in Encryption in transit.

A small gotcha: encryption in transit does not fix sloppy endpoints. If an app allows fallback to unencrypted connections, someone could intercept or downgrade traffic. That’s why “only allow encrypted connections” policies matter in real deployments.
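
As a small client-side illustration, Python’s standard `ssl` module can refuse old protocol versions, and a one-line check can reject plaintext URLs before any request is made. Treat this as a sketch: the helper names and the example URL are assumptions, and your provider’s SDK may expose its own settings for the same thing.

```python
import ssl
from urllib.parse import urlsplit


def strict_client_context() -> ssl.SSLContext:
    """Client-side TLS settings: verify certificates and refuse anything older than TLS 1.2."""
    ctx = ssl.create_default_context()            # certificate and hostname checks are on by default
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # rejects SSLv3, TLS 1.0, and TLS 1.1
    return ctx


def require_https(url: str) -> str:
    """Reject plaintext endpoints instead of silently falling back to them."""
    if urlsplit(url).scheme != "https":
        raise ValueError(f"refusing non-HTTPS endpoint: {url}")
    return url


ctx = strict_client_context()
endpoint = require_https("https://storage.example.com/upload")  # hypothetical URL
```

You could then pass `ctx` to a call such as `urllib.request.urlopen(endpoint, context=ctx)`, so the connection fails rather than downgrades.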

Here’s a practical way to think about it: encryption in transit is your front door lock. It matters at the exact time your data is most exposed to interception.

Data in Use: Securing Info in Your Computer’s Temporary Memory

Data in use is the hardest state to explain, and often the most misunderstood. It refers to data while your system processes it, stored in memory (RAM) or handled by running code.

Once data gets into RAM, classic encryption at rest and in transit no longer protects it the same way. The system needs the plain data to compute results. That’s why attackers sometimes focus on memory attacks, stolen snapshots, or unsafe handling of sensitive values in running workloads.

This is why “encryption in use” gets attention now. Newer protections aim to reduce the time and places where plaintext sits in memory. You might hear about approaches like trusted execution environments, hardened memory handling, and techniques such as homomorphic encryption for certain compute patterns.

Even some industry writing frames this as the “invisible battlefield” in RAM, since encryption often ends once the data must be processed. See Data in Use: The Invisible Battlefield Hiding in Your RAM.

Where you see this matter most:

- Sensitive data processed for analytics

- Systems that run on shared infrastructure

- AI or ML pipelines that touch private data

- Finance or health workloads with strict exposure limits

In cloud setups, “data in use” can mean more than just RAM. It can include temporary storage, intermediate results, cached values, and logs created during processing. So, the best defense pairs encryption with controls like:

- Least-privilege access to workloads

- Locked-down debug settings and logs

- Secure memory handling features when available

- Clear policies for how long sensitive data stays in memory
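
The last item, limiting how long sensitive values stay in memory, can be sketched in application code. This is a best-effort illustration only: Python may keep other copies (the original `bytes` object, for one), so it does not replace hardware-backed protections. The helper name `short_lived_secret` is made up for this example.

```python
import hashlib
from contextlib import contextmanager


@contextmanager
def short_lived_secret(value: bytes):
    """Hand out a mutable copy of a secret and zero it when the block ends."""
    buffer = bytearray(value)
    try:
        yield buffer
    finally:
        for i in range(len(buffer)):
            buffer[i] = 0          # best effort: overwrite the working copy


with short_lived_secret(b"session-token-123") as secret:
    fingerprint = hashlib.sha256(secret).hexdigest()   # use the secret while it is live
    held = secret                                      # keep a reference to inspect afterwards

wiped = all(byte == 0 for byte in held)                # working copy is zeroed after the block
```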

Most organizations start with at rest and in transit. Then they add stronger controls for in use, as requirements get stricter and workloads get more sensitive.

Why Use Cloud Encryption? Benefits That Protect and Save You

Cloud encryption isn’t just a “nice-to-have” control. It turns your data into something attackers can steal, but usually cannot use. In other words, it helps protect your privacy and can cut the damage if a breach ever happens.

Think of it like keeping your valuables in a safe inside a locked room. Even if someone breaks into the room, the safe still holds the real secret. That matters more in the cloud, where your data moves across systems and touches more services than you manage day to day.

The biggest benefit: stolen data stays unreadable

When encryption works, your data turns into ciphertext. That ciphertext looks like random noise, even if a bad actor downloads it. As a result, theft becomes far less useful.

This benefit shows up in a few common situations:

- Lost devices (laptops, phones, USB drives) that may hold cached data

- Stolen backups pulled from storage snapshots

- Misconfigured buckets or exposed storage objects

- Compromised accounts where an attacker tries to grab raw files

Encryption doesn’t mean you will never face incidents. However, it can make the stolen material nearly worthless without the key.

Also, it reduces how much an attacker can monetize your data quickly. Instead of turning files into usable records, they get encrypted blobs. So, the clock runs against them.

It supports GDPR and HIPAA expectations for protecting sensitive data

Many organizations handle data that must follow strict rules. In the US, HIPAA requires safeguards for electronic protected health information (ePHI), and encryption is commonly part of that safeguard mix. In the EU, GDPR expects security controls that match the risk to personal data, and it names encryption as one such measure.

If you want a clear baseline, you can compare how these frameworks treat cloud data protection and encryption needs. For an accessible comparison, see GDPR vs HIPAA key differences.

It’s not just about passing audits. Encryption gives you a stronger story for regulators and customers. It shows you protected sensitive data with a real technical control, not just a policy document.

A key takeaway is this: encryption helps reduce the impact of exposure, and it helps you show due care when auditors ask hard questions.

If you store sensitive data in the cloud, encryption often becomes the simplest way to meet “protect it” expectations.

It boosts privacy and gives you more control over access

Even with strong identity and network controls, you still need a protection layer that follows the data itself. Encryption does that. It gives you an extra barrier that does not depend only on where the attacker is located.

When you pair encryption with good key management, you can limit who can read what. For example, you can ensure that:

- Only authorized services can decrypt data

- Keys rotate on schedule

- Access can be tracked and audited

That means your privacy posture improves. It also helps you reduce internal risk, because users with broad storage access still cannot read encrypted content unless they also hold decrypt rights on the key.

If you manage backups and archives, encryption also helps. Those “cold” copies often live longer than a system session. Yet they can still contain personal and sensitive data. Encrypting them protects you across the whole lifecycle.

It secures backups and reduces breach costs

Backups often sit at the center of disaster recovery. But they also can become an attacker’s prize. If backups contain sensitive data and are not encrypted, the breach impact grows fast.

Encryption helps in two ways:

- It limits what attackers can extract from backup files.

- It can make recovery safer, because restored data remains protected.

It also helps with the financial side. Breaches can trigger notification costs, credit monitoring, incident response work, and regulatory penalties. While encryption does not guarantee “no costs,” it can reduce the scale of what you must disclose.

If you want a real-world lesson about cloud breach risk, review Capital One’s 2019 cloud credential breach case study. The incident highlights how access problems and exposed systems can lead to massive exposure, even when organizations think controls exist.

It improves your odds of avoiding reportable exposure

Not every encryption setup reduces the same risk. Still, the pattern is consistent: encryption can reduce how much readable data ends up in the hands of attackers.

Also, encryption supports security programs that use multiple layers. For example, strong cloud encryption often pairs with:

- Least-privilege access

- MFA for admin access

- Tight logging and monitoring

- Secure storage configuration checks

When you stack these controls, you reduce the chance of a full “data in the clear” outcome. In other words, encryption helps you stop the worst-case path from becoming real.

To ground the “why now” side with broader cloud risk context, you can look at cloud security statistics in 2026. The point isn’t to scare you. It’s to show that cloud incidents keep rising, so controls like encryption matter more each year.

Quick self-check: are you getting the real benefit?

Encryption only protects you when it’s actually in place where it matters. Before you trust the cloud, confirm these basics:

- Encryption is enabled by default for stored data

- Data in transit uses TLS (not optional downgrades)

- Keys are protected through a separate key service

- You can prove encryption settings during reviews

When you answer these, you turn encryption from a checkbox into a real shield.

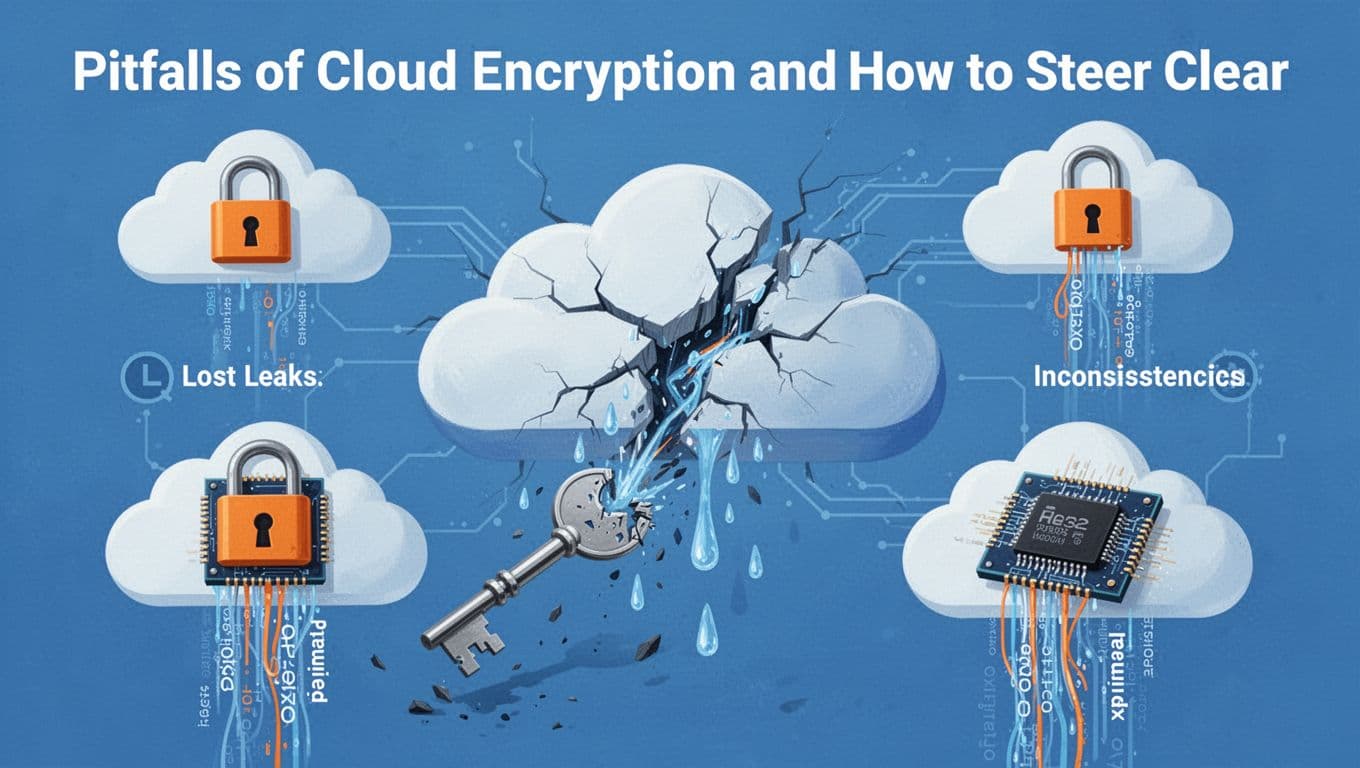

Pitfalls of Cloud Encryption and How to Steer Clear

Cloud encryption sounds simple: lock data, hand out keys, stay safe. In practice, weak setup, messy operations, and unclear trust boundaries can turn encryption into a false comfort. You don’t have to “avoid encryption.” You need to avoid the mistakes around it.

Lost keys can mean lost data (and panic restores)

The biggest risk is also the most human: losing the keys. When keys vanish, ciphertext stays scrambled. That means backups become useless, incident response slows down, and recovery turns into guessing and workarounds.

This pitfall shows up in common ways:

- Teams rotate or delete keys without updating apps and restore workflows

- Keys get exported into spreadsheets or end up with unclear ownership

- Services fail because the key service policy changed

- Someone grants access “temporarily,” then the access expires just when a restore needs it

Key management is where encryption projects often fail. One reason is simple: most teams treat key handling as plumbing, not as a core system. For a clear view of why key control drives outcomes, see Key management’s real problem.

Also watch your failure plan. Ask how you restore data if:

- A key region becomes unavailable

- A grant expires during an outage

- A service account is locked out during an incident

Encryption protects data, but it also makes availability depend on key continuity.

“In use” encryption gaps can expose plaintext in memory

Encryption at rest and in transit only gets you so far. During processing, data often exists as plaintext in memory (RAM), in temporary files, or inside logs. If an attacker finds a memory leak or a disclosure bug, they can steal data that encryption is no longer protecting.

This is why “encrypt everything” can still fail. Modern workloads, serverless functions, and sandboxed code can create sharp edges where secrets pop out during crashes, debug runs, or memory disclosure flaws.

Research on memory leakage attacks for serverless platforms shows why this matters, especially when isolation isn’t airtight. For background, read LeakLess and memory leakage risk.

Practical guardrails include:

- Minimize sensitive data in logs and traces

- Disable verbose debug output in production

- Treat crash dumps and memory captures as sensitive

- Use least privilege so workloads cannot read more than needed
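
For the logging guardrail, here’s one possible sketch using Python’s standard `logging` module. The filter masks values that look like `token=...` or `password=...` before a handler writes them. The pattern and the `RedactSecrets` name are assumptions for illustration, and a real deployment would need patterns that match its own secret formats.

```python
import io
import logging
import re

# Assumed secret shapes: key=value pairs with these key names.
SECRET_PATTERN = re.compile(r"(?i)\b(password|token|api_key|secret)=([^\s&]+)")


class RedactSecrets(logging.Filter):
    """Mask secret-looking values before a handler writes the record."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()                     # message with args applied
        record.msg = SECRET_PATTERN.sub(r"\1=[REDACTED]", message)
        record.args = ()                                  # args are already merged in
        return True                                       # keep the (now masked) record


stream = io.StringIO()                                    # stands in for a log file
handler = logging.StreamHandler(stream)
handler.addFilter(RedactSecrets())

logger = logging.getLogger("redaction-demo")
logger.addHandler(handler)
logger.setLevel(logging.INFO)
logger.propagate = False

logger.info("login ok user=%s token=%s", "alice", "abc123")
output = stream.getvalue()
```

The written line keeps `user=alice` for debugging but replaces the token value with `[REDACTED]`.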

Multi-cloud and inconsistent policies create hidden “unencrypted” pockets

Many organizations encrypt one storage path, then forget the rest. In multi-cloud setups, encryption policies drift across providers, regions, accounts, and backup services. Result: data looks encrypted in one place, but backup buckets, exports, replicas, and other “side copies” remain unprotected.

This isn’t a theory. Encryption gaps often come from operational reality, scattered ownership, and audit trails that don’t line up across clouds. If you want a grounding on how multi-cloud security complexity creates blind spots, see Multi-cloud security risks.

Two more problems make it worse:

- Key access visibility is fragmented, so no one sees who can decrypt

- Different teams set different defaults for the same “type” of data

You can tighten this with stronger key governance and access oversight. For an example of why centralized visibility matters, see cloud key management access visibility.

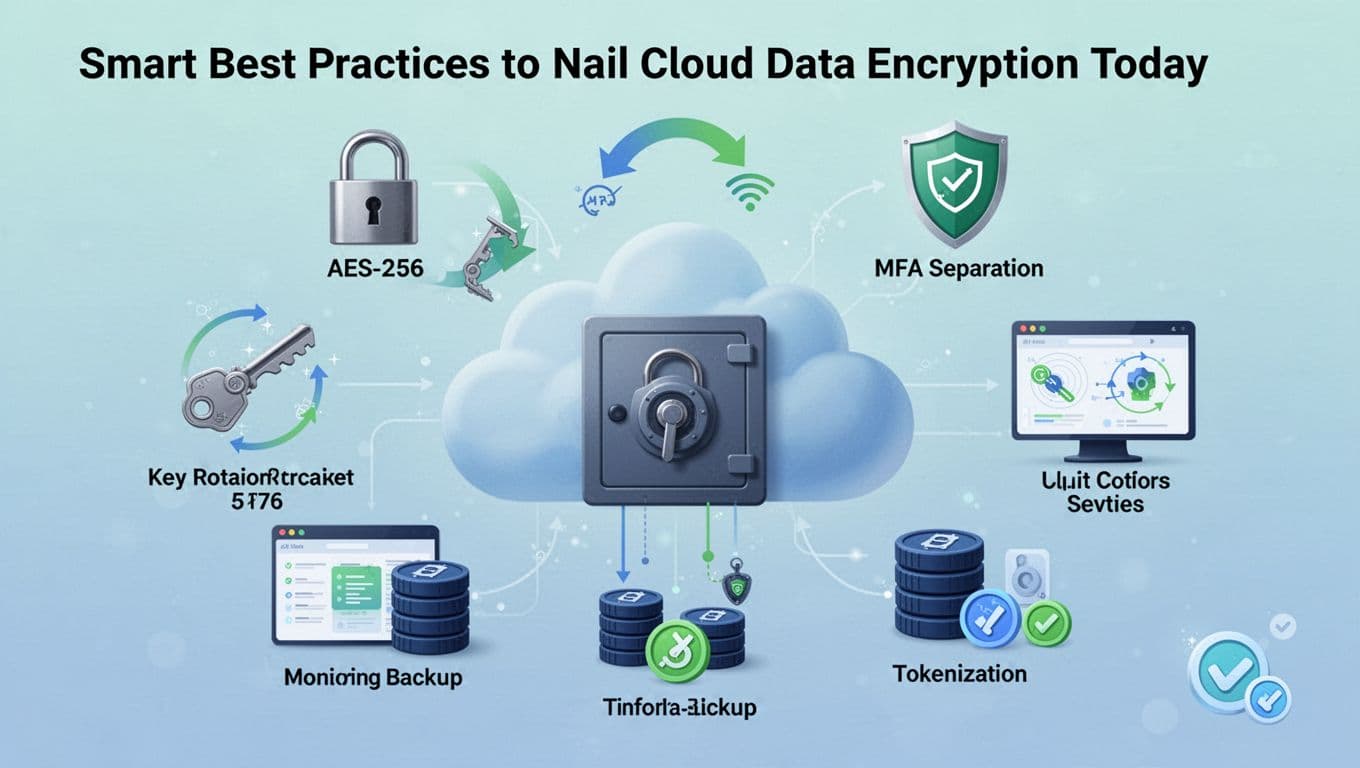

Smart Best Practices to Nail Cloud Data Encryption Today

To nail cloud encryption, stop thinking of it as a checkbox. Treat it like a routine you run on purpose. You want strong crypto, clean key handling, and consistent rules across every place data shows up.

When you get this right, encryption does two things at once. It limits what attackers can read, and it reduces how much harm you face if something goes wrong. Still, the small details matter, especially around keys, access, and backups.

Use AES-256 as your default, then verify it everywhere

Start with a simple baseline: AES-256 for data at rest. Most cloud providers support it, and it fits how cloud storage works. However, “supported” isn’t the same as “enabled.”

So, verify encryption at the service level, not just at the account level. Check buckets, volumes, snapshots, database storage, and backup copies. Also, confirm whether the provider-managed option matches your risk needs.

Here’s a practical way to keep it consistent:

- Turn on encryption by default for every storage type you use.

- Use AES-256 for stored data encryption unless you have a specific reason to choose otherwise.

- Prove it in audits, not just in screenshots from setup day.

- Cover backups and replicas, because attackers love side copies.
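
The checklist above can be turned into a simple audit over an inventory. The sketch below assumes you can export your storage resources into a list. The `StorageResource` fields and the sample inventory are hypothetical, not any provider’s API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageResource:
    name: str
    kind: str              # e.g. "bucket", "volume", "snapshot", "backup"
    encrypted: bool
    algorithm: str = ""    # empty when the export does not say


def find_gaps(resources, required_algorithm="AES-256"):
    """Return (name, reason) pairs for resources that miss the baseline."""
    gaps = []
    for resource in resources:
        if not resource.encrypted:
            gaps.append((resource.name, "encryption disabled"))
        elif resource.algorithm != required_algorithm:
            gaps.append((resource.name, f"uses {resource.algorithm or 'unknown algorithm'}"))
    return gaps


# Hypothetical inventory, including the side copies that are easy to forget.
inventory = [
    StorageResource("prod-data", "bucket", True, "AES-256"),
    StorageResource("prod-data-replica", "bucket", False),
    StorageResource("nightly-backup", "backup", True),
    StorageResource("db-volume", "volume", True, "AES-128"),
]

gaps = find_gaps(inventory)
```

Running a check like this on a schedule is one way to prove coverage in audits instead of relying on setup-day screenshots.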

If you need a clear reference model for the three encryption states, this guide on data at rest, in transit, and in use is a solid starting point: Cloud Data Encryption: At Rest, In Transit, and In Use | Cloud Computing Authority.

Encrypt everything sensitive, not just the obvious files

It’s easy to encrypt the “main” dataset and miss the rest. Yet the leaks often hide in the boring places: exports, logs, caches, temp files, and third-party systems.

Think of your cloud like a kitchen. You lock the pantry, but what about the fridge, spice jar, and takeout containers? Sensitive data can spread across many containers fast.

A strong rule is encrypt everything sensitive, then decide what must stay readable by whom. In practice, that means you should treat these data types as in-scope:

- Backups, snapshots, and images (including test and staging copies)

- Database exports and data warehouse loads

- Object storage replicas across regions or accounts

- Data in logs and traces (mask secrets, then encrypt where possible)

- Managed service outputs (reports, report caches, and analytic extracts)

- Customer files and attachments moving through apps

Also, watch out for “temporary” workflows. Data is often exposed during import, processing, and ETL steps. Encryption at rest on your main dataset won’t help if your export lands unencrypted in a working bucket.

Separate key duties, rotate keys, and treat keys like crown jewels

Encryption strength is only as good as your keys. If your keys get mishandled, encryption becomes decoration.

Modern best practice is key rotation on schedule and key separation by function or sensitivity level. Keep the ability to decrypt limited to the narrowest set of services and roles.

Key handling works best when you do three things:

- Use a key management service (KMS) instead of storing keys in app code.

- Rotate keys automatically using provider tooling or policy.

- Separate duties so no single team can do everything.

You also want real boundaries between “who can use keys” and “who can manage keys.” For example, a developer might deploy apps, but they shouldn’t directly control decryption rights. That’s not paranoia, that’s basic hygiene.
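
That boundary between using keys and managing keys is easy to check mechanically. Here’s a minimal sketch that assumes you can list each principal’s key permissions. The permission names and the `grants` mapping are illustrative, not taken from any specific provider.

```python
# Assumed permission names, split by duty.
MANAGE = {"create_key", "rotate_key", "delete_key", "edit_policy"}
USE = {"encrypt", "decrypt"}


def duty_conflicts(grants: dict) -> list:
    """Return principals that can both manage keys and use them on data."""
    return sorted(
        principal
        for principal, permissions in grants.items()
        if permissions & MANAGE and permissions & USE
    )


# Hypothetical grants pulled from a key policy export.
grants = {
    "platform-admin": {"create_key", "rotate_key", "edit_policy"},
    "billing-service": {"decrypt"},
    "legacy-ops": {"delete_key", "decrypt"},
}

conflicts = duty_conflicts(grants)
```

Here only `legacy-ops` is flagged, because it can both delete keys and decrypt data.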

If an attacker can access your keys, the encryption layer no longer protects you.

If you want a provider-neutral guide to the key side, use a dedicated resource on key management practices like: Best Practices for Public Key and Private Key Management in 2026.

Use MFA for key access, then monitor every decrypt attempt

Next, lock down who can touch encryption controls. MFA for key access matters because key access often sits behind admin roles.

Then add visibility. Monitoring should cover both the “happy path” and the odd events. That includes decrypt requests, policy changes, and key access patterns over time.

A practical monitoring setup usually includes:

- Audit logs for decrypt, encrypt, and key policy changes

- Alerts for unusual key usage spikes

- Access reviews for any role granted decrypt rights

- Retention rules that protect audit logs too
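
An alert for unusual key usage can start very simply. The sketch below counts decrypt calls per principal and flags anything far above its usual volume. The event shape, the baseline numbers, and the threshold factor are all assumptions. Real audit logs carry more fields, and real alerting usually needs time windows.

```python
from collections import Counter


def decrypt_spikes(events, baseline, factor=3.0):
    """Return principals whose decrypt count exceeds `factor` times their usual volume."""
    counts = Counter(e["principal"] for e in events if e["action"] == "decrypt")
    return {who: n for who, n in counts.items() if n > factor * baseline.get(who, 0)}


# Hypothetical audit events for one hour.
events = (
    [{"principal": "backup-job", "action": "decrypt"}] * 12
    + [{"principal": "report-svc", "action": "decrypt"}] * 90
    + [{"principal": "report-svc", "action": "encrypt"}] * 5
    + [{"principal": "unknown-role", "action": "decrypt"}] * 2
)
baseline = {"backup-job": 10, "report-svc": 20}   # typical decrypts per hour (assumed)

alerts = decrypt_spikes(events, baseline)
```

A principal with no baseline at all, like `unknown-role` here, is flagged on its first decrypt, which is often the event you most want to see.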

Also watch service accounts. Many decrypt calls come from automation. If an attacker steals credentials, they can pull plaintext through the “legit” route. In other words, encryption doesn’t stop stolen tokens. Tight key policies only limit what a stolen token can decrypt.

Force strong TLS for data in transit (and block weak fallbacks)

For traffic moving between clients and cloud services, aim for TLS 1.2 or higher. If you allow downgrades or old ciphers, attackers can try to squeeze into gaps.

So, enforce policies that do two things. First, they require encryption. Second, they remove weak options.

In real deployments, TLS enforcement can live at multiple layers:

- Load balancers and API gateways

- Service-to-service calls

- Database and storage endpoints

- Authentication flows

If you want a concrete example of how cloud guidance frames “encryption in transit” as a security control, AWS publishes a clear reference here: SEC09-BP02 Enforce encryption in transit.

Standardize encryption across accounts, regions, and cloud services

Encryption drift is common. One team flips settings in one region, another team leaves a bucket default in a different account. Over time, you end up with “encrypted pockets” and “unencrypted pockets.”

Your fix is standardization. Treat encryption settings like infrastructure code, not tribal knowledge. Also, unify backups so they inherit the same controls as your primary data.

This is where centralized key management becomes a big win. With one consistent key strategy, you reduce gaps and make audits easier.

In addition, you should align these areas:

- Encryption settings for storage, databases, and backups

- KMS key policies across regions

- Role permissions for encrypt and decrypt

- Token lifetimes for apps that request plaintext

- Data retention and disposal rules for decrypted outputs

Plan for “harvest now, decrypt later” and build crypto agility

A real 2026 trend is more focus on crypto agility. Attackers may try to steal encrypted data today and decrypt it later if cryptography weakens.

That creates two tasks for your roadmap:

- Prepare for post-quantum cryptography (PQC) migration where it matters

- Build systems that can swap algorithms without rewriting everything

Look for providers and libraries that support algorithm upgrades. Also, design your app so encryption settings can change through configuration, not code refactors.
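
Here’s a sketch of what “configuration, not code refactors” can look like. Python’s standard library has no AES, so this example uses message authentication (HMAC) instead of encryption, but the pattern is the same: every record carries an algorithm label, and the reader dispatches on that label. The registry, labels, and function names are assumptions for illustration.

```python
import hashlib
import hmac

# Registry of supported algorithms; adding one is a one-line change.
ALGORITHMS = {
    "hmac-sha256": hashlib.sha256,
    "hmac-sha512": hashlib.sha512,
}
CURRENT_ALGORITHM = "hmac-sha256"   # assumed to come from configuration


def protect(key: bytes, message: bytes, algorithm: str = "") -> dict:
    """Tag a message and record which algorithm produced the tag."""
    algorithm = algorithm or CURRENT_ALGORITHM
    tag = hmac.new(key, message, ALGORITHMS[algorithm]).hexdigest()
    return {"alg": algorithm, "message": message, "tag": tag}


def verify(key: bytes, record: dict) -> bool:
    """Check a record using whatever algorithm it says it was written with."""
    digestmod = ALGORITHMS[record["alg"]]
    expected = hmac.new(key, record["message"], digestmod).hexdigest()
    return hmac.compare_digest(expected, record["tag"])


key = b"demo-key-for-illustration-only"
old_record = protect(key, b"invoice 1041")                  # written under today's default
new_record = protect(key, b"invoice 1042", "hmac-sha512")   # written after a config change
both_valid = verify(key, old_record) and verify(key, new_record)
```

Because old records name their own algorithm, you can change the default without breaking reads, then re-protect older data on your own schedule.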

Meanwhile, tokenization can help when you need to reduce how often plaintext exists at all. Instead of moving sensitive raw data around, you store tokens and keep the mapping locked down. Combined with encryption, it adds another layer of control.
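
As a rough illustration of tokenization, the sketch below swaps a sensitive value for a random token and keeps the mapping in one place. `TokenVault` is a hypothetical in-memory class. A real vault would persist the mapping in encrypted storage behind strict access controls.

```python
import secrets


class TokenVault:
    """Hypothetical in-memory vault mapping random tokens to sensitive values."""

    def __init__(self) -> None:
        self._by_token = {}
        self._by_value = {}

    def tokenize(self, value: str) -> str:
        """Return a stable random token that reveals nothing about the value."""
        if value in self._by_value:
            return self._by_value[value]
        token = "tok_" + secrets.token_urlsafe(16)
        self._by_token[token] = value
        self._by_value[value] = token
        return token

    def detokenize(self, token: str) -> str:
        """Look up the original value; only the vault can do this."""
        return self._by_token[token]


vault = TokenVault()
token = vault.tokenize("patient-id-88231")     # downstream systems store this token
same_token = vault.tokenize("patient-id-88231")
original = vault.detokenize(token)             # restricted to services that need plaintext
```

Analytics, logs, and exports can carry `token` freely, while the raw value stays inside the vault.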

If you want more practical “encryption everywhere” implementation thinking, AWS-focused guidance can help you structure the approach: How to Implement Encryption Everywhere on AWS.

Don’t forget data in use: confidential compute and safer processing

Even with perfect encryption at rest and in transit, data can become readable while apps process it. That’s where “data in use” controls matter.

In cloud terms, look at confidential computing and secure processing modes. These options aim to keep data protected while it runs, even from certain privileged users.

You don’t need confidential compute for every workload. Still, it fits well when you handle:

- highly sensitive customer data

- regulated data with strict access rules

- workloads that depend on strong isolation

Also, reduce plaintext spillover. Limit debug logs, mask secrets, and avoid writing sensitive values into temp files.

In short, encrypt everywhere, then add “data in use” where your risk demands it.

Conclusion

Cloud data encryption keeps your files unreadable to anyone without the key. It works across the three main exposure points (data at rest, data in transit, and data in use), so stolen copies usually turn into useless ciphertext.

The strongest takeaway is simple: key management decides whether encryption actually protects you. With keys protected, rotated, and tied to the right access, you reduce breach impact and support privacy and compliance expectations.

Next step: review your cloud settings today. Confirm encryption is enabled by default, TLS is enforced, and your keys live in a separate key service with tight access controls. Then share this post with your team and ask them to check one account or bucket together.

What part of encryption feels hardest for you right now: keys, coverage, or “data in use” controls? Strong cloud protection starts with making encryption real, not just configured.